What the Justice Department’s Latest Epstein File Release Tells Us About Image-Based Sexual Abuse

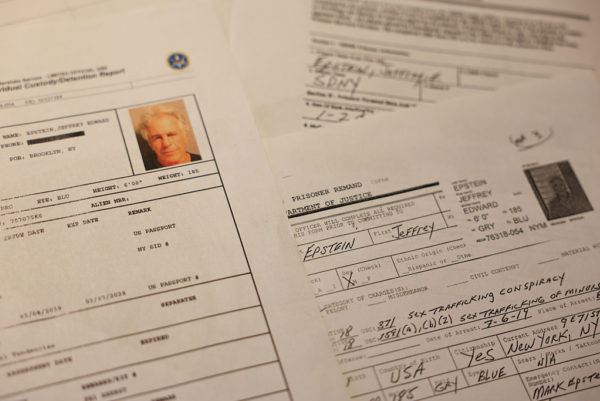

Last Friday, the U.S. Department of Justice (DOJ) published the last tranche of more than 3 million pages required by the Epstein Files Transparency Act. As first reported by The New York Times, the release included dozens of unredacted nude photos of young women — some of whom are suspected to be minors — posted on the Department’s website.

Describing the DOJ’s “scramble” to make the files public, the Times implied that the victims’ privacy, dignity and safety were collateral damage in the government’s rush to comply with Congress after it had already missed the December 2025 deadline. But this framing does the victims an incredible disservice.

The release of private images of these women and girls, published without their consent, follows a widespread and dangerous pattern of minimizing digital abuse, which is routinely dismissed as less harmful because it happens online. The DOJ’s reckless disregard for its legal and ethical duty of care reflects the same attitude, resulting in the country’s premier law enforcement agency engaging in the very harm that it's entrusted by the public to prevent and prosecute: the non-consensual distribution of intimate images (NCII) of identifiable adults, and child sexual abuse material (CSAM).

Even more stunning, the DOJ’s actions occurred less than a year after President Trump signed the bipartisan Take It Down Act, which makes publishing real or AI-generated non-consensual intimate images of adults and minors a felony. The Act also requires certain tech platforms to remove images published without the subject’s consent within 48 hours of being notified, a standard of due diligence that the DOJ also appears to have failed to meet.

While Deputy Attorney General Todd Blanche characterized the release of the images as “a mistake,” rather than intentional, many survivors have called out the administration’s hypocrisy: It made sure to carefully obscure the names and identities of Epstein’s correspondents in the release, while compromising the privacy of the victims.

Their attorneys have petitioned for the removal of all files from the DOJ's website, citing irreparable harm to their physical safety and mental health, with one survivor describing the release of her information as “not only profoundly distressing and re-traumatizing, but it also places me and my child at potential physical risk.”

Since last Friday, a number of survivors have reported experiencing harassment from the media — and even death threats — saying that their lives have been “turned upside down” as a result.

And for the Jane Does who hadn’t publicly come forward, the government’s failure to redact their images exposed their identities.

Despite the acute increase in technology-facilitated gender-based violence — and image-based sexual abuse in particular — digital forms of violence against women and girls are routinely underreported, undercounted, and under-addressed. In the past year alone, an estimated one in 10 American women experienced some form of technology-facilitated sexual violence, according to the Centers for Disease Control and Prevention (CDC), and more than 2.3 million women have had their intimate images shared without their consent.

Given the explosion of nudify and “undressing” apps since the CDC first fielded the survey in 2024, the proliferation of AI-generated images and video depicting image-based sexual abuse will almost certainly mean an increase in future prevalence.

The misperception that harassment, threats and abuse that occur virtually are somehow less serious, a bias that society (and, too often, law enforcement) holds, isn’t only off-base; it’s dangerous. Research has established that technology-facilitated gender-based violence is seldom isolated to singular, online acts, and that particular forms of digital abuse, including stalking, doxxing (the publication of personally identifying information, such as victims’ names, addresses, or bank accounts) and threats of physical or sexual assault, are linked to real, in-person security risks. A recent report by Dr. Julie Posetti found that more than four in 10 women public figures who experience online attacks also endure offline violence.

And, as demonstrated by the Epstein files, some forms of image-based sexual abuse aren’t digital violence at all — they’re real records of sexual abuse, degradation and rape.

Even absent a connection with physical violence, survivors of online harassment and abuse experience profound psychosocial impacts and bear direct and indirect costs to their livelihoods, education and well-being from these violations of their intimate privacy, a term Dr. Danielle Citron coined for what she describes as essential to “developing identities, enjoying self-respect and social respect, and opening up to others so that we can forge relationships and fall in love.”

Given the real, devastating consequences of online violence and image-based sexual abuse, legal experts, survivors and advocates advise against legislation that requires an intent to cause harm as the basis for defining the offense. The DOJ’s negligence in the Epstein files release further underscores that the harm to victims is severe no matter the reason behind the action, whether the unredacted files were a mistake or, as some survivors suspect, more calculated.

Danielle Bensky, one of the survivors, shared, “I thought it was carelessness, and then I went to incompetence… And now it feels, it feels a bit deliberate. It feels like a bit of an attack on survivors.”

Either way, the events of the past week are a stark reminder of how far we have yet to go as a society to take the objectification of women and girls seriously, both online and in real life. The government’s actions, compounded by media coverage that failed to plainly name the abuse, echo the Trump administration’s defense of X’s Grok AI chatbot generating millions of sexually abusive images as free speech.

What will it take to prioritize women’s dignity and safety over defending the disclosure — on purpose or through reckless error — of private information?

If you or someone you know has experienced image-based sexual abuse, help is available. Adults can contact the U.S.-based Cyber Civil Rights Initiative 24/7 Image Abuse Helpline at +1-844-878-2274 and visit StopNCII.org. Individuals under 18 can contact the National Center for Missing & Exploited Children at +1-800-843-5678 or visit TakeItDown.org.

Cailin Crockett is a former National Security Council director and White House Gender Policy Council senior advisor. She serves on the Advisory Committee of the Cyber Civil Rights Initiative and on California Gov. Gavin Newsom’s Tech Innovation Council. She is a policy consultant for StopNCII.org.
